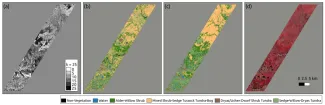

Accurate, high-resolution maps of vegetation provide critical information for modeling and understanding terrestrial ecosystem processes and land-atmosphere interactions in heterogeneous Arctic ecosystems. However, most existing Arctic vegetation maps are coarse in resolution and vary in detail and accuracy. Remote sensing-based approaches for mapping vegetation, while promising, are challenging in high-latitude environments because of frequent cloud cover, polar darkness, and the limited availability of high-resolution remote sensing datasets (e.g., <10 m). We developed a new remote sensing-based multi-sensor data fusion approach for producing high-resolution maps of vegetation in the Kougarok area of the Seward Peninsula, Alaska. We explored optimal strategies to fuse data from a range of satellite-based sources, including hyperspectral, multi-spectral, and optical remote sensing and high-resolution topography. Supervised and unsupervised classification techniques were applied to various combinations of these data to characterize how much each measurement strategy contributes to distinguishing vegetation distributions on the landscape. Deep neural network models were developed to mine the patterns of vegetation from high-dimensional data. Vegetation maps were produced and validated against available maps and ground observations, yielding accurate, high-resolution (5 m) maps for NGEE-Arctic study sites.
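The fusion and unsupervised classification steps described above can be illustrated with a minimal sketch. This is not the authors' implementation: it assumes the input rasters are already co-registered on a common grid, it uses random synthetic arrays in place of real imagery, and it substitutes a plain k-means clustering for the classification techniques used in the study. The function names `fuse_layers` and `kmeans_labels`, the band counts, and the number of clusters are all hypothetical choices made for illustration.

```python
import numpy as np


def fuse_layers(layers):
    """Stack co-registered rasters into one standardized (H, W, F) feature cube.

    Each layer is either (H, W) or (H, W, bands). Assumption: all layers
    already share the same grid; no resampling is done here.
    """
    cubes = [np.atleast_3d(np.asarray(a, dtype=float)) for a in layers]
    shape = cubes[0].shape[:2]
    if any(c.shape[:2] != shape for c in cubes):
        raise ValueError("all layers must share the same grid")
    cube = np.concatenate(cubes, axis=2)
    # Standardize each feature so that no single sensor dominates distances.
    mean = cube.mean(axis=(0, 1), keepdims=True)
    std = cube.std(axis=(0, 1), keepdims=True)
    return (cube - mean) / np.where(std > 0, std, 1.0)


def kmeans_labels(cube, k, iters=20, seed=0):
    """Cluster pixels of a feature cube with a basic k-means (illustrative only)."""
    h, w, f = cube.shape
    x = cube.reshape(-1, f)
    rng = np.random.default_rng(seed)
    centers = x[rng.choice(len(x), size=k, replace=False)]
    for _ in range(iters):
        # Squared Euclidean distance from every pixel to every center.
        dist = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dist.argmin(axis=1)
        for j in range(k):
            members = x[labels == j]
            if len(members):
                centers[j] = members.mean(axis=0)
    return labels.reshape(h, w)


# Synthetic stand-ins for real data (hypothetical band counts).
rng = np.random.default_rng(42)
multispectral = rng.random((20, 20, 4))
hyperspectral = rng.random((20, 20, 30))
elevation = rng.random((20, 20))

cube = fuse_layers([multispectral, hyperspectral, elevation])
label_map = kmeans_labels(cube, k=5)
print(cube.shape, label_map.shape)  # (20, 20, 35) (20, 20)
```

Dropping one layer from the list passed to `fuse_layers` and comparing the resulting label maps is one simple way to probe how much a given data source contributes, in the spirit of the sensor-combination comparison described above.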

Citation: Langford, Z.L., J. Kumar, and F.M. Hoffman (2017), Convolutional Neural Network Approach for Mapping Arctic Vegetation Using Multi-Sensor Remote Sensing Fusion, 2017 IEEE International Conference on Data Mining Workshops (ICDMW), pp. 322–331, New Orleans, Louisiana, USA, doi:10.1109/ICDMW.2017.48

For more information, please contact:

Jitu Kumar

kumarj@ornl.gov